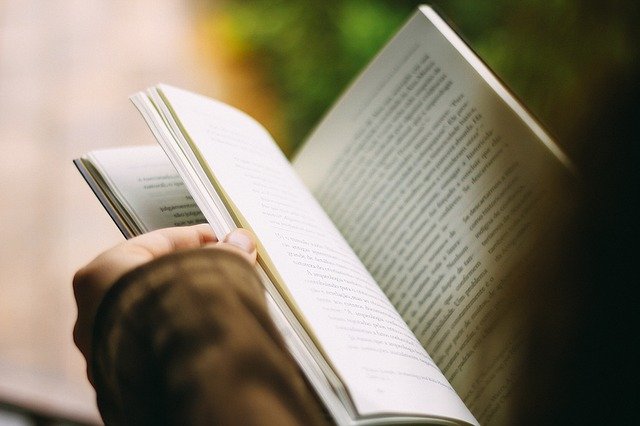

Inside the beating heart of many a student lies an inner cheat. To get passing grades, every effort will be made to do the least to achieve the most. Efforts to subvert the central class examination are the stuff of legend: discreetly written notes on hands, palms and other body parts; secreted pieces of paper; messages concealed in various vessels that may be smuggled into the hall.

In the fight against such cunning devilry, vigilant invigilators have pursued these efforts with eagle eyes, attempting to ensure the integrity of the exam answers. Of late, the broader role of invigilation has become more pertinent than ever, notably in the face of artificial intelligence technologies that seek to undermine the very idea of the challenging, individually researched answer.

ChatGPT, a language processing tool powered by comprehensive, deep AI technology, offers nightmares for instructors and pedagogues in spades. Myopic university managers will be slower to reach any coherent conclusions about this large language model (LLM), as they always are. But given the diminishing quality of degrees and their supposed usefulness, not to mention running costs and the temptations offered by educational alternatives, this will come as a particularly unwelcome headache.

For the student and anyone with an inner desire to labour less for larger returns, it is nothing less than a dream, a magisterial shortcut. Essays, papers, memoranda, and drafted speeches can all be crafted by this supercomputing wonder. It has already shaken educational establishments and even made Elon Musk predict that humanity was “not far from dangerously strong AI.”

Launched on November 30, 2022 and the creative offspring of AI research company OpenAI, ChatGPT is a work in progress, open to the curious, the lazy and the opportunistic. Within the first five days of launching, it had 1 million users on the books.

It did not take long for the chatbot to do its work. In January, the Manchester Evening News reported that a student by the name of Pieter Snepvangers had asked the bot to put together a 2,000-word essay on social policy. Within 20 minutes, the work was done. While the essay was not stellar, a lecturer informed Snepvangers that it could pass with a grade of 53. In the words of the instructor, “This could be a student who has attended classes and has engaged with the topic of the unit. The content of the essay, this could be somebody that’s been in my classes. It wasn’t the most terrible in terms of content.”

On receiving the assessment from the lecturer, Snepvangers could only marvel at what the site had achieved: “20 minutes to produce an essay which is supposed to demonstrate 12 weeks of learning. Not bad.”

The trumpets of doomsday have been sounded. Beverly Pell, an advisor on technology for children and a former teacher based in Irvine, California, saw few rays of hope with the arrival of the ChatGPT bot, notably on the issue of performing genuine research. “There’s a lot of cheap knowledge out there,” she told Forbes. “I think this could be a danger in education, and it’s not good for kids.”

Charging the barricades of such AI-driven knowledge forms tends to ignore the fundamental reality that the cheat or student assistance industry in education has been around for years. The armies of ghost writers scattered across the globe willing to receive money for writing the papers of others have not disappeared. The website stocked with readily minted essays has been ubiquitous.

More recently, in the wake of the COVID-19 pandemic, online sites such as Chegg have offered around-the-clock assistance with homework, exam preparation and writing support. The Photomath app, to take another striking example, has seen over 300 million downloads since coming into use in 2014. It enables students to take a picture of their maths problems and seek answers.

Meta’s chief AI scientist Yann LeCun is also less than impressed by claims that ChatGPT is somehow bomb blowing in its effects. “In terms of underlying techniques, ChatGPT is not particularly innovative.” It was merely “well put together” and “nicely done.” Half a dozen startups, in addition to Google and Meta, were using “very similar technology” to OpenAI.

It is worth noting that universities, colleges and learning institutions constitute only one aspect of the information cosmos that is ChatGPT. The chatbot continues to receive queries of varying degrees of banality. Questions range from astrology to gift ideas for friends and family.

Then come those unfortunate legislators who struggle with the language of writing bills for legal passage. “When asked to write a bill for a member of Congress that would make changes to federal student aid programs,” writes Michael Brickman of the American Enterprise Institute, “ChatGPT produced one in seconds. When asked for Republican and Democrat amendments focused on consumer protection, it delivered a credible version that each party might conceivably offer.”

What are the options in terms of combating such usurping gremlins? For one, ChatGPT’s gratis status is bound to change once the research phase is concluded. And, at least for the moment, the service occasionally overloads, impairing responses to the questions users pose. To cope with this, OpenAI created ChatGPT Plus, a plan that enables users to access material even during those rocky fluctuations.

Another relevant response is to keep a keen eye on the curriculum itself. In the words of Jason Wingard, a self-professed “global thought leader”, “The key to retaining the value of a degree from our institution is ensuring your graduates have the skills to change with any market. This means that we must tweak and adapt our curriculum at least every single year.” Wingard’s skills in global thought leadership do not seem particularly attuned to how university curricula, and incompetent reformers who insist on changing them, function.

There are also more rudimentary, logistical measures one can adopt. A return to pen and paper could be a start. Or perhaps the typewriter. These will be disliked and howled at by those narcotised by the screen, online solutions and finger tapping. But the modern educator will have to face facts. For all the remarkable power available through AI and machine-related learning, we are also seeing a machine-automated form of unlearning, free of curiosity. Some branches of the tree of knowledge are threatening to fall off.

Dr. Binoy Kampmark was a Commonwealth Scholar at Selwyn College, Cambridge. He currently lectures at RMIT University.