Earlier this month, the lower house of the Brazilian legislature voted to fast-track a controversial “fake news bill” (Bill 2630), drawing opposition from major tech firms such as Google. The debate surrounding the bill has once again foregrounded the question of how governments around the world moderate online content.

Brazil’s Bill 2630 has emerged from a context of rising violence that many attribute to material shared on social media. After Jair Bolsonaro’s defeat in the 2022 general election, claims of election fraud circulating online fueled rioting in the country (the parallels with Trump’s loss in the U.S. in 2020 are glaring). Many believe that misinformation and unregulated speech on social media are translating into violence and other harms on the ground, which explains why the bill has the backing of many Brazilians, including lawmakers.

This is not to say that the bill is unchallenged. Evangelical and conservative lawmakers oppose it, while tech majors like Google and Meta are campaigning earnestly against it, which, predictably, has irritated the authorities. The country’s government and judiciary have forced Google and Telegram to remove messages on their platforms that sought to turn public opinion against the bill, viewing the campaign as a ‘subversion’ of democracy.

The bill’s provisions would make internet platforms like Google and Meta responsible for seeking out and removing content the bill defines as “illegal”, with hefty fines and criminal prosecution as penalties. If passed, it is poised to be one of the “strongest” social media laws in the world. However, no new date has been set for a vote since the previously scheduled vote on May 2, 2023, was postponed.

Should the state remove online ‘misinformation’?

The problem of misinformation and hate speech is by no means trivial. It strains personal and community relationships and can harm electoral integrity. It enables the targeting of marginalized communities and prominent individuals within them. It distorts people’s views on science and history, creating debates and controversies whose real intent is not to further legitimate inquiry but to foment anger and dissatisfaction. Misinformation and hate speech must be understood against the backdrop of social, political, and economic realities. Having the government remove such content whenever it appears, or punishing platforms for failing to proactively seek out and ban prohibited content, is a quick fix that creates more problems than it solves.

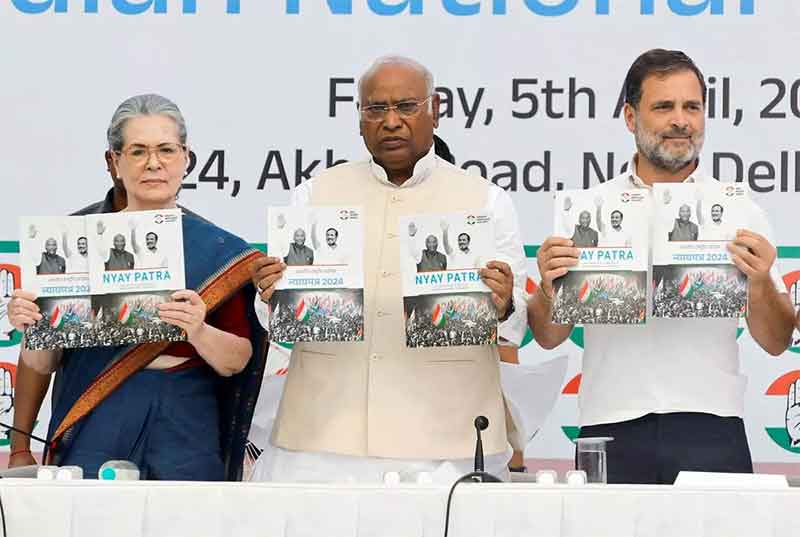

This concern is not limited to Brazil; it applies to every country with laws on online content moderation. The call for accountability for hate speech and false information raises the question of who should hold these tech platforms accountable. Handing this power entirely to the government is problematic. A government may arbitrarily decide which acts of expression count as ‘hate speech’ or ‘misinformation’. Its decision-making and redressal processes may be neither independent nor transparent. A ruling party can give its own propaganda machine a free run on social media while censoring its opponents. The measures it takes to combat misinformation may not always be proportionate. And finally, why should we assume that the government itself never disseminates ‘misinformation’?

There are many situations where the term ‘mis/disinformation’ can be weaponized to shield official propaganda from counter-narratives, such as accounts of abuses by security forces in regions of occupation and conflict. In these places, the internet, smartphones, and social media partly redress the imbalance of power between the state and the local population by allowing residents to disseminate their own viewpoints, experiences, and documentation of events. Anything that goes against the grain of the official narrative or its permitted variations can then be labeled ‘disinformation’ and criminalized. The platforms that host such content and the users who engage with it can be targeted by claiming that the alleged disinformation has a ‘national security’ dimension.

The concept of ‘misinformation’ can jeopardize internet access itself. Because the internet is the medium through which alleged ‘misinformation’ or “rumours” spread, shutting it down can be justified as a way of stopping them. India, which has recorded the highest number of internet shutdowns in the world, has repeatedly tried to stave off protests, demonstrations, and popular mobilization by cutting internet access in the name of law and order and security.

Does this mean that all online content is okay?

There are categories of material that should not be allowed online, but defining them is invariably an intervention in the social, cultural, and political life of a country and must rest on the broadest possible consensus.

Content such as child sexual abuse material or weapon-making instructions, for example, is easy to place on the list. But this does not automatically validate every measure taken in the name of a ‘safe’ or ‘accountable’ internet. Criticisms of the UK’s ‘Online Safety Bill’, India’s IT Rules, 2021, France’s 2018 law against the “manipulation of information”, and other such laws share concerns about overreach and vagueness. ‘Misinformation’ can easily be used to shield the government from criticism, as in India, where a body controlled by the government itself is to monitor the internet for false information about the government. Similarly, the Indian government has placed itself at the top of a ‘grievance redressal’ mechanism through which it claims to hold social media platforms “accountable”.

Minimizing government interference in online content moderation should be a common goal for those who value a free and open internet. This also means that hate speech, fake news, and trolling must be countered by exploring alternatives and critically examining the structure of online platforms themselves, including the market incentives to maximize users’ screen time and engagement by promoting content likely to provoke anger or other negative emotions.

Further reading

Online content moderation is a multifaceted topic that I have explored here through the lens of rights and freedoms exercised in digital spaces. Here are some other resources I recommend:

- Troll armies, a growth industry in the Philippines, may soon be coming to an election near you: On pro-ruling party propaganda spread through ‘troll armies’ in the Philippines [2019]

- How UK’s Online Safety Bill fell victim to never-ending political crisis: On conflicting interests and demands in London’s “landmark” tech legislation [2023]

- Jade Lyngdoh On Content Moderation In Non-English Languages | Meta India Tech Scholars 2021-22: On the need for better moderation of content in non-English, “regional” languages

- Finding 404: A Report On Website Blocking In India: A report by advocacy group SFLC.in

Arjun Banerjee is a writer and journalist.