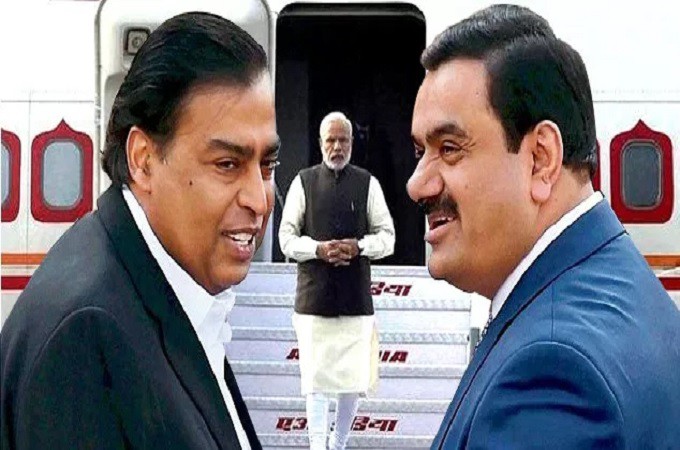

As celebrities such as Bollywood actress Katrina Kaif and Sara Tendulkar become victims of the emerging threat known as "deepfakes," fake news has resurfaced as a serious global menace, and it is imperative that we address it.

Fake news and the need for thorough fact-checking have long been concerns in the media landscape, well before the advent of AI technologies. Deepfakes are simply the newest obstacle in that old fight.

Traditionally, reputable news platforms have relied on editors and moderators to verify news content manually, a labor-intensive process. However, because news stories move quickly, exhaustive authenticity checks are not always feasible.

The proliferation of counterfeit content

Fake content extends beyond text to visual and audio material. As companies and creators share more photos and videos on social media, deepfakes and other manipulated images, videos, and audio recordings of real people have become notably more common.

Content verification and source authentication offer ample opportunities for innovative technologies and ambitious startups. At the same time, recent advances in video translation and dubbing, such as Google's Universal Translator with its lip-sync capabilities, have raised concerns about content integrity. On the other hand, modern AI-powered translation and dubbing services like vidby could remove human intervention from the process, and with it the opportunity to distort the message deliberately, offering a more dependable solution.

Video and audio synthesis technologies have existed since the late 1990s, with advances in voice processing and computer graphics driving their evolution. Modern generative AI has made creating deepfakes accessible even to people with no technical expertise. Deepfakes are used not only for entertainment or mischief but also for malicious ends. For instance, several years ago Symantec reported cases of large-scale fraud in which deepfake audio impersonating company executives was used to instruct employees to transfer funds to specified accounts.

One of the principal challenges posed by deepfakes is that many countries lack adequate legislation governing their creation and removal. As deepfakes become more prevalent and lifelike, it remains unclear who is accountable for their responsible development and what rules will govern them in the future.

Exploring the Potential and Hurdles

Deepfake technology has made remarkable progress, enabling the creation of videos with astonishing realism. Some of the notable capabilities include:

- Face Swapping: Deepfakes can seamlessly replace an individual’s face in an existing video with the visage of another person.

- Speech Synthesis: Deepfake audio can replicate a person's voice with remarkable precision.

- Lip Sync: Deepfakes can convincingly manipulate the lip movements of a person in a video, making them appear to say things they never actually uttered.

- Real-Time Deepfakes: Recent advances allow deepfakes to be generated in real time, so a face or voice can be altered during a live video call or stream.

Nonetheless, these capabilities are accompanied by a set of substantial challenges:

- Ethical Dilemmas: Deepfake technology raises profound ethical questions about privacy, consent, and the potential for exploitation.

- Misuse: Deepfakes can be exploited to create fake news, revenge pornography, and material for cyberbullying.

- Security Vulnerabilities: The capacity to replicate voices or faces carries inherent security risks, as it can be employed for impersonation.

- Legal Complexities: The legal framework often lags behind technology, rendering it challenging to address issues stemming from the creation and dissemination of deepfakes.

Advancements in deepfake detection technologies

The growing sophistication of deepfake technology has been recognized as a significant cybersecurity threat in recent years. Concurrently, the technology used to identify and expose deepfakes is also evolving.

Various organizations, including DARPA, Microsoft, and IBM, have been developing technologies to distinguish fake content from authentic content. Deepfake software often fails to replicate intricate details, so examining specific features, such as shadows around the eyes, skin that is overly smooth or wrinkled, unrealistic facial hair, fabricated moles, and unnatural lip color, can help identify deepfakes.

Startups are exploring innovative solutions to address this challenge. For instance, some offer technology that embeds a digital signature into content, similar to a watermark, to authenticate original photos and videos. This solution has received a positive response from large insurance companies, suggesting that similar technology may soon become commonplace in cameras and mobile phones to ensure content authenticity.
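To illustrate the general idea behind content authentication, here is a minimal sketch, not any startup's actual product. It swaps in a simpler technique than a true digital signature: an HMAC tag computed with Python's standard `hmac` and `hashlib` modules over the raw bytes of a file. Real signing schemes typically use public-key cryptography so anyone can verify without the secret key. The key, function names, and sample bytes below are hypothetical placeholders.

```python
import hashlib
import hmac

# Hypothetical secret key held by the camera or publisher (assumption for illustration).
SECRET_KEY = b"example-device-key"


def sign_content(data: bytes, key: bytes = SECRET_KEY) -> str:
    """Return a hex HMAC-SHA256 tag computed over the raw bytes of a photo or video."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_content(data: bytes, tag: str, key: bytes = SECRET_KEY) -> bool:
    """Return True only if the content is byte-for-byte unchanged since it was tagged."""
    expected = sign_content(data, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


original = b"...raw image bytes..."  # placeholder for a real file's contents
tag = sign_content(original)
print(verify_content(original, tag))         # True
print(verify_content(original + b"x", tag))  # False: any edit breaks the tag
```

Any change to the file, even a single byte, produces a different tag, which is why such a scheme can flag tampered copies of an original photo or video.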

Tools to tame deepfakes

Many deepfake software programs are open source, which benefits both those who create deepfakes and those who build tools to detect them.

As deepfake technology continues to advance, so will the methods for exposing and countering them. The ongoing development of these technologies underscores the need for sustained vigilance and innovative solutions to address the ever-evolving challenges posed by deepfakes.

Despite the progress made in deepfake detection and prevention, much work remains. The lack of established legislation on the creation and dissemination of deepfakes is still a significant obstacle. As synthetic video and audio become more realistic and prevalent, the need for such legislation grows more urgent.

Ethical considerations are also crucial when dealing with these technologies. While AI holds immense potential to revolutionize various industries and enhance our lives, its misuse can have severe consequences. Therefore, the safe and ethical development of AI technologies, including deepfakes, must be a top priority.

Final thoughts

Research institutions, tech companies, and policymakers must collaborate to address these challenges. While technology provides some of the tools needed to detect and prevent the misuse of AI, education and public awareness are equally vital in mitigating the impact of deepfakes. People must be informed that deepfakes exist and how they can be used to manipulate. They also need the skills to assess the content they encounter and to apply critical thinking to what they read and watch.

Mohd Ziyauallah Khan is a freelance content writer based in Nagpur. He is also an activist and social entrepreneur, co-founder of the group TruthScape, a team of digital activists fighting disinformation on social media.